Building an AI LEGO Sorting Machine: Raspberry Pi + OpenCV Tutorial

Last Updated: January 01, 2026


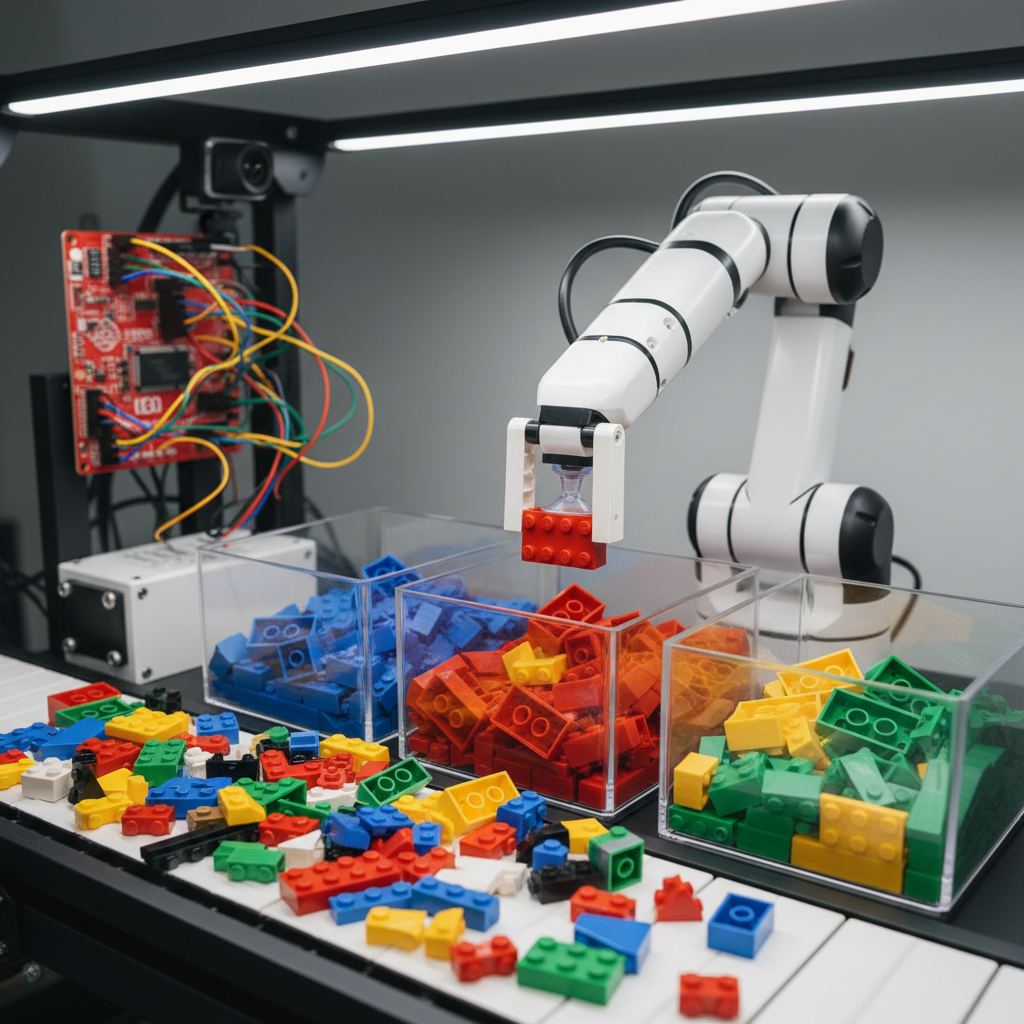

The automated LEGO sorting machine is the "holy grail" project for LEGO hobbyists. This guide covers building a complete vision-based sorter using Raspberry Pi, OpenCV for image processing, and optionally TensorFlow Lite for AI classification. Parts are identified by shape and color, then routed to the correct bin.

Table of Contents

- System Architecture

- Hardware Setup

- Vibratory Feeder

- Computer Vision Pipeline

- Classification Methods

- Sorting Mechanism

- Complete Code

System Architecture

┌─────────────┐     ┌─────────────┐     ┌─────────────┐
│  LEGO Bulk  │ ──▶ │  Vibratory  │ ──▶ │  Conveyor   │
│   Hopper    │     │   Feeder    │     │    Belt     │
└─────────────┘     └─────────────┘     └──────┬──────┘
                                               │
                                               ▼
                                        ┌─────────────┐
                                        │   Camera    │
                                        │  (Pi Cam)   │
                                        └──────┬──────┘
                                               │
                                               ▼
                                        ┌─────────────┐
                                        │  Raspberry  │
                                        │    Pi 4     │
                                        │  OpenCV +   │
                                        │   TF Lite   │
                                        └──────┬──────┘
                                               │
                                               ▼
                                        ┌─────────────┐
                                        │  Build HAT  │
                                        │   + Servo   │
                                        └──────┬──────┘
                                               │
                           ┌────────────┬──────┴──────┬────────────┐
                           ▼            ▼             ▼            ▼
                       ┌────────┐   ┌────────┐    ┌────────┐   ┌────────┐
                       │ Bin 1  │   │ Bin 2  │    │ Bin 3  │   │ Bin 4  │
                       │ (Red)  │   │ (Blue) │    │(Bricks)│   │(Plates)│
                       └────────┘   └────────┘    └────────┘   └────────┘

Hardware Setup

Bill of Materials

| Component | Purpose | Approx. Cost |
|---|---|---|
| Raspberry Pi 4 (4GB) | Main processor | $55 |
| Pi Camera Module 3 | Image capture | $25 |
| Build HAT | Motor control | $25 |
| LEGO Technic Motor (Large) | Conveyor drive | $15 |
| LEGO Technic Motor (Medium) | Feeder vibration | $12 |
| Servo motor (SG90) | Sorting gate | $3 |
| White LED strip | Consistent lighting | $10 |
| White acrylic sheet | Background | $5 |

Camera Positioning

Side View:

             ┌─────────┐
             │ Camera  │ ← 15-20 cm above belt
             └────┬────┘
                  │
                  ▼
═══════════════[BRICK]═══════════════ ← White conveyor belt
                  │
──────────────────┴────────────────── ← LED strip underneath (backlit)
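The mounting height determines how many pixels each stud covers, which interacts with the minimum contour area of 500 pixels used in the detection step. The sketch below gives a rough estimate. It assumes a 66° horizontal field of view mapped onto the 640-pixel image width; that figure is an assumption, so measure your own lens and crop before relying on it.

```python
import math

STUD_PITCH_MM = 8.0  # LEGO stud spacing


def pixels_per_mm(height_mm, image_width_px=640, hfov_deg=66.0):
    """Approximate image scale at the belt for a camera looking straight down.

    hfov_deg is an assumed horizontal field of view, not a measured value.
    """
    visible_width_mm = 2 * height_mm * math.tan(math.radians(hfov_deg / 2))
    return image_width_px / visible_width_mm


def footprint_area_px(studs_x, studs_y, height_mm):
    """Approximate pixel area of a part lying flat, seen from above."""
    scale = pixels_per_mm(height_mm)
    return (studs_x * STUD_PITCH_MM * scale) * (studs_y * STUD_PITCH_MM * scale)


for height in (150, 200):
    print(height, round(pixels_per_mm(height), 2), round(footprint_area_px(1, 1, height)))
```

Under these assumptions a 1x1 footprint covers roughly 690 pixels at 15 cm but fewer than 400 at 20 cm, which is below the 500-pixel noise filter. If small parts go undetected, lowering the camera or the area threshold is worth trying.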

Vibratory Feeder

The feeder separates clumped bricks and delivers them single-file to the conveyor.

How It Works

- Motor with eccentric weight creates vibration

- Angled ramp directs bricks toward conveyor

- Vibration separates stuck-together pieces

Building the Eccentric Weight

Motor Output ──┬── [Axle]
               │
               └── [Off-center Weight]
                             │
                     Rotation creates
                     vibration force

Feeder Control Code

import time

from buildhat import Motor

# Feeder motor on Build HAT port B, matching the main sorting loop
feeder_motor = Motor('B')

def start_feeder(intensity=50):
    """Start vibratory feeder at given intensity (0-100)"""
    feeder_motor.start(speed=intensity)

def stop_feeder():
    """Stop the vibratory feeder"""
    feeder_motor.stop()

def pulse_feeder(on_time=0.5, off_time=0.5, cycles=10):
    """Pulse feeder to help separate stuck bricks"""
    for _ in range(cycles):
        feeder_motor.start(speed=80)
        time.sleep(on_time)
        feeder_motor.stop()
        time.sleep(off_time)

Computer Vision Pipeline

Step 1: Capture Image

import cv2
from picamera2 import Picamera2

# Initialize camera
picam2 = Picamera2()
config = picam2.create_still_configuration(main={"size": (640, 480)})
picam2.configure(config)
picam2.start()

def capture_frame():
    """Capture a frame from the camera"""
    frame = picam2.capture_array()
    return cv2.cvtColor(frame, cv2.COLOR_RGB2BGR)

Step 2: Detect Part Contour
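The detection in this step depends on a fixed grayscale threshold of 200, so it helps to check which part colors actually fall below it. The sketch below applies the luma weights that OpenCV uses for BGR-to-gray conversion. The sample colors are illustrative values, not official LEGO color codes, and the helper names are hypothetical.

```python
def to_gray(bgr):
    """Grayscale value using the weights OpenCV applies in COLOR_BGR2GRAY."""
    b, g, r = bgr
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def is_foreground(bgr, thresh=200):
    """Mirror THRESH_BINARY_INV: pixels at or below thresh count as part."""
    return to_gray(bgr) <= thresh


# Hypothetical sample colors in BGR order (illustrative only)
samples = {
    "red": (0, 0, 200),
    "yellow": (0, 205, 255),
    "white": (245, 245, 245),
}

for name, bgr in samples.items():
    print(name, to_gray(bgr), is_foreground(bgr))
```

A white part on a white background is invisible to this threshold, and bright yellow sits close to the cutoff, so light-colored parts may need a different background or a different segmentation cue.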

def detect_part(frame):
    """Detect LEGO part against white background"""
    # Convert to grayscale
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

    # Threshold (white background = high values)
    _, thresh = cv2.threshold(gray, 200, 255, cv2.THRESH_BINARY_INV)

    # Find contours
    contours, _ = cv2.findContours(thresh, cv2.RETR_EXTERNAL,
                                   cv2.CHAIN_APPROX_SIMPLE)

    if not contours:
        return None

    # Get largest contour (the LEGO part)
    largest = max(contours, key=cv2.contourArea)

    # Filter out noise (too small)
    if cv2.contourArea(largest) < 500:
        return None

    return largest

Step 3: Extract Features
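One of the features extracted in this step is the bounding-box aspect ratio w / h. A part lying sideways on the belt yields the reciprocal value, so a 2x1 brick can report either 2.0 or 0.5. The sketch below shows a normalization that treats both orientations alike; the helper name is hypothetical. Parts lying at other angles would also need a rotated box, for example from cv2.minAreaRect.

```python
def normalized_aspect_ratio(w, h):
    """Return long side / short side, so the result is always >= 1.

    Returns 0.0 for degenerate boxes with a zero-length side.
    """
    short, long_side = sorted((w, h))
    if short <= 0:
        return 0.0
    return long_side / short


print(normalized_aspect_ratio(64, 32))
print(normalized_aspect_ratio(32, 64))
```

With this normalization the shape rules only need to cover ratios of 1 and above.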

import numpy as np

def extract_features(frame, contour):
    """Extract shape and color features from detected part"""
    features = {}

    # Bounding box
    x, y, w, h = cv2.boundingRect(contour)
    roi = frame[y:y+h, x:x+w]

    # === SHAPE FEATURES (Hu Moments) ===
    moments = cv2.moments(contour)
    hu_moments = cv2.HuMoments(moments).flatten()
    features['hu_moments'] = hu_moments

    # Aspect ratio
    features['aspect_ratio'] = w / h if h > 0 else 0

    # Solidity (area / convex hull area)
    hull = cv2.convexHull(contour)
    hull_area = cv2.contourArea(hull)
    features['solidity'] = cv2.contourArea(contour) / hull_area if hull_area > 0 else 0

    # === COLOR FEATURES (HSV Histogram) ===
    hsv = cv2.cvtColor(roi, cv2.COLOR_BGR2HSV)

    # Create mask from contour, shifted into ROI coordinates
    # (the offset keeps the contour's dtype, which drawContours requires)
    mask = np.zeros(roi.shape[:2], dtype=np.uint8)
    shifted_contour = contour - np.array([x, y], dtype=contour.dtype)
    cv2.drawContours(mask, [shifted_contour], 0, 255, -1)

    # Calculate histogram over hue and saturation
    hist = cv2.calcHist([hsv], [0, 1], mask, [30, 32], [0, 180, 0, 256])
    hist = cv2.normalize(hist, hist).flatten()
    features['color_hist'] = hist

    # Dominant color (mean H, S, V inside the mask)
    mean_color = cv2.mean(hsv, mask=mask)
    features['dominant_hue'] = mean_color[0]
    features['dominant_sat'] = mean_color[1]
    features['dominant_val'] = mean_color[2]  # read by classify_by_color

    return features

Classification Methods
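The rules that follow key on dominant_hue, which is an arithmetic mean of OpenCV hue values (0-179). Hue is circular, so red pixels split between values near 0 and values near 179 can average out to roughly 90, which lands in the green/blue range. A circular mean avoids this. The sketch below is one way to compute it from a list of hue samples; the function name is hypothetical.

```python
import math


def circular_mean_hue(hues, hue_range=180):
    """Mean of hue values on a circle; OpenCV 8-bit hue runs 0..179."""
    angles = [2 * math.pi * h / hue_range for h in hues]
    sin_sum = sum(math.sin(a) for a in angles)
    cos_sum = sum(math.cos(a) for a in angles)
    mean_angle = math.atan2(sin_sum, cos_sum) % (2 * math.pi)
    return mean_angle * hue_range / (2 * math.pi)


red_pixels = [2, 4, 176, 178]
print(sum(red_pixels) / len(red_pixels))  # arithmetic mean
print(circular_mean_hue(red_pixels))      # circular mean
```

For the red samples above, the arithmetic mean is 90 while the circular mean stays at the red end of the scale (close to 0 or, equivalently, close to 180).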

Method 1: Rule-Based (Simple)

def classify_by_color(features):
    """Simple color-based classification"""
    hue = features['dominant_hue']
    sat = features['dominant_sat']

    if sat < 50:
        return "white" if features['dominant_val'] > 200 else "black"
    elif hue < 10 or hue > 170:
        return "red"
    elif 10 <= hue < 25:
        return "orange"
    elif 25 <= hue < 35:
        return "yellow"
    elif 35 <= hue < 85:
        return "green"
    elif 85 <= hue < 130:
        return "blue"
    else:
        return "unknown"

def classify_by_shape(features):
    """Classify by aspect ratio and solidity"""
    ar = features['aspect_ratio']
    sol = features['solidity']

    if 0.9 < ar < 1.1 and sol > 0.9:
        return "1x1_brick"
    elif 1.8 < ar < 2.2 and sol > 0.85:
        return "2x1_brick"
    elif ar > 3 and sol > 0.9:
        return "plate"
    else:
        return "unknown"

Method 2: Database Matching
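Raw Hu moments differ by many orders of magnitude, so a Euclidean distance over them is dominated by the first one or two values. A common remedy is a signed log transform before comparing. The sketch below shows that transform on plain Python floats. The helper names and sample numbers are illustrative and were not measured from real parts.

```python
import math


def log_scale_hu(hu_moments, eps=1e-30):
    """Signed log10 transform that brings Hu moments to comparable scales."""
    scaled = []
    for h in hu_moments:
        if abs(h) < eps:
            scaled.append(0.0)  # treat vanishing moments as zero
        else:
            scaled.append(-math.copysign(1.0, h) * math.log10(abs(h)))
    return scaled


def hu_distance(a, b):
    """Euclidean distance between two log-scaled Hu moment vectors."""
    la, lb = log_scale_hu(a), log_scale_hu(b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(la, lb)))


# Illustrative values only
part_a = [1.6e-1, 3.2e-4, 1.1e-7, 2.0e-9, -4.0e-17, 5.0e-11, 1.0e-17]
part_b = [1.6e-1, 3.0e-4, 9.0e-8, 2.5e-9, -3.0e-17, 4.0e-11, 2.0e-17]
print(hu_distance(part_a, part_a))
print(hu_distance(part_a, part_b))
```

If the matching score is computed on log-scaled moments, the weights and the acceptance cutoff would need to be re-tuned, since the distances change scale.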

import numpy as np
from scipy.spatial.distance import cosine

# Pre-computed feature database
KNOWN_PARTS = {
    "3001_red": {"hu_moments": [...], "color_hist": [...]},
    "3002_blue": {"hu_moments": [...], "color_hist": [...]},
    # ... more parts
}

def match_to_database(features):
    """Find closest match in database"""
    best_match = None
    best_score = float('inf')

    for part_id, known_features in KNOWN_PARTS.items():
        # Compare Hu moments
        hu_dist = np.linalg.norm(
            features['hu_moments'] - known_features['hu_moments']
        )

        # Compare color histogram
        color_dist = cosine(
            features['color_hist'],
            known_features['color_hist']
        )

        # Combined score
        score = hu_dist * 0.4 + color_dist * 0.6

        if score < best_score:
            best_score = score
            best_match = part_id

    return best_match if best_score < 0.5 else "unknown"

Method 3: TensorFlow Lite (AI)
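The classifier in this section returns a label together with a confidence value. The sketch below shows one way that pair might be used downstream. It assumes a hypothetical cutoff of 0.6, below which the part is treated as unknown; the threshold and the function name are illustrative and would need tuning on real data.

```python
def accept_prediction(predicted_class, confidence, min_confidence=0.6):
    """Return the label if the model is confident enough, else "unknown"."""
    if confidence >= min_confidence:
        return predicted_class
    return "unknown"


print(accept_prediction("2x2_brick", 0.91))
print(accept_prediction("1x2_plate", 0.42))
```

Routing low-confidence parts to a catch-all bin lets them be re-fed later rather than mis-sorted.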

import cv2
import numpy as np
import tflite_runtime.interpreter as tflite

# Load model
interpreter = tflite.Interpreter(model_path="lego_classifier.tflite")
interpreter.allocate_tensors()

input_details = interpreter.get_input_details()
output_details = interpreter.get_output_details()

CLASSES = ["1x1_brick", "2x1_brick", "2x2_brick", "1x2_plate", ...]

def classify_with_ai(frame, contour):
    """Classify using TensorFlow Lite model"""
    # Extract ROI
    x, y, w, h = cv2.boundingRect(contour)
    roi = frame[y:y+h, x:x+w]

    # Resize to model input size. The input shape is (batch, height, width,
    # channels), while cv2.resize expects (width, height).
    height, width = input_details[0]['shape'][1:3]
    roi_resized = cv2.resize(roi, (int(width), int(height)))

    # Normalize to [0, 1] (assumes a float32 model; the frame is BGR, so
    # convert with cv2.cvtColor first if the model was trained on RGB images)
    input_data = roi_resized.astype(np.float32) / 255.0
    input_data = np.expand_dims(input_data, axis=0)

    # Run inference
    interpreter.set_tensor(input_details[0]['index'], input_data)
    interpreter.invoke()

    # Get prediction
    output = interpreter.get_tensor(output_details[0]['index'])
    predicted_class = CLASSES[np.argmax(output)]
    confidence = float(np.max(output))

    return predicted_class, confidence

Sorting Mechanism

Gate Sorter Design

Top View:

Conveyor Direction →
═══════════════════════════════════════════════
   │                                       │
   │                [GATE]                 │
   │                 /  \                  │
   ▼                /    \                 ▼
[Bin 1]                                 [Bin 2]

Servo Control
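For a hobby servo driven at 50 Hz, the code in this section maps an angle to a PWM duty cycle with duty = 2.5 + (angle / 180) * 10. The sketch below wraps that formula in a function and shows the pulse widths it implies. The 0.5-2.5 ms range follows from the formula, but whether a given SG90 tolerates the full range is an assumption to verify on your hardware. The function names are hypothetical.

```python
PWM_FREQUENCY_HZ = 50  # matches GPIO.PWM(18, 50)


def angle_to_duty(angle):
    """Convert a servo angle (0-180 degrees) to a duty cycle in percent."""
    angle = max(0, min(180, angle))  # clamp to the servo's nominal range
    return 2.5 + (angle / 180) * 10


def duty_to_pulse_ms(duty):
    """Pulse width in milliseconds for a duty cycle at PWM_FREQUENCY_HZ."""
    period_ms = 1000 / PWM_FREQUENCY_HZ
    return period_ms * duty / 100


for angle in (0, 45, 90, 135):
    duty = angle_to_duty(angle)
    print(angle, duty, duty_to_pulse_ms(duty))
```

The result of angle_to_duty can be passed directly to servo.ChangeDutyCycle for the GPIO variant of the gate.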

import time

import RPi.GPIO as GPIO
from buildhat import Motor

# Using Build HAT motor for gate
gate_motor = Motor('C')

# Or using standard servo on GPIO
GPIO.setmode(GPIO.BCM)
GPIO.setup(18, GPIO.OUT)
servo = GPIO.PWM(18, 50)  # 50Hz
servo.start(0)

def set_gate_position(bin_number):
    """Move sorting gate to direct part to specified bin"""
    positions = {
        1: 0,    # Straight
        2: 45,   # Slight left
        3: 90,   # Left
        4: 135   # Far left
    }
    angle = positions.get(bin_number, 0)

    # For Build HAT motor
    gate_motor.run_to_position(angle)

    # For servo: convert angle to duty cycle
    # duty = 2.5 + (angle / 180) * 10
    # servo.ChangeDutyCycle(duty)

def sort_part(part_class):
    """Determine bin and actuate gate"""
    bin_mapping = {
        "red": 1,
        "blue": 2,
        "brick": 3,
        "plate": 4
    }
    # Classes without a mapping fall through to bin 1
    bin_num = bin_mapping.get(part_class, 1)
    set_gate_position(bin_num)
    time.sleep(0.3)  # Wait for part to pass

Complete Sorting Loop

#!/usr/bin/env python3
"""LEGO Sorting Machine - Main Control Loop"""

import cv2
import time
from picamera2 import Picamera2
from buildhat import Motor

# detect_part, extract_features, classify_by_color and sort_part are the
# functions defined in the earlier sections; paste or import them here.

# Initialize hardware (same camera configuration as in Step 1)
picam2 = Picamera2()
config = picam2.create_still_configuration(main={"size": (640, 480)})
picam2.configure(config)
picam2.start()

conveyor = Motor('A')
feeder = Motor('B')
# The gate motor on port C is created as gate_motor in the Servo Control
# code, so it is not opened a second time here.

def main():
    print("Starting LEGO Sorter...")

    # Start conveyor and feeder
    conveyor.start(speed=30)
    feeder.start(speed=40)

    try:
        while True:
            # Capture frame
            frame = picam2.capture_array()
            frame = cv2.cvtColor(frame, cv2.COLOR_RGB2BGR)

            # Detect part
            contour = detect_part(frame)

            if contour is not None:
                # Extract features
                features = extract_features(frame, contour)

                # Classify
                part_class = classify_by_color(features)
                print(f"Detected: {part_class}")

                # Sort
                sort_part(part_class)

            time.sleep(0.1)  # Caps the loop at about 10 FPS

    except KeyboardInterrupt:
        print("Stopping...")

    finally:
        conveyor.stop()
        feeder.stop()
        picam2.stop()

if __name__ == "__main__":
    main()

Optimization Tips

- Lighting: Consistent, diffuse lighting prevents shadows that confuse detection

- Background: Pure white background maximizes contrast

- Camera: Use a global shutter camera for fast conveyors to avoid rolling-shutter skew, and keep the exposure short to limit motion blur

- Processing: Resize images before processing for speed

- Batching: For TensorFlow Lite, classifying several parts in one inference call can reduce per-call overhead, which matters most when an accelerator is attached
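How much a part smears in the image depends on belt speed, exposure time, and image scale. The sketch below is a back-of-the-envelope estimate. All input numbers are chosen purely for illustration, and the function names are hypothetical.

```python
def motion_blur_px(belt_speed_mm_s, exposure_s, px_per_mm):
    """Distance in pixels that a part travels during one exposure."""
    return belt_speed_mm_s * exposure_s * px_per_mm


def max_exposure_s(belt_speed_mm_s, px_per_mm, max_blur_px=1.0):
    """Longest exposure that keeps blur within max_blur_px."""
    return max_blur_px / (belt_speed_mm_s * px_per_mm)


# Illustrative numbers: 100 mm/s belt, 3 px/mm image scale
print(motion_blur_px(100, 0.01, 3))
print(max_exposure_s(100, 3))
```

Short exposures need bright lighting, which is one more reason to invest in the LED strip.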
